Navigating AI Hallucinations in CX

In today's digital age, artificial intelligence (AI) has become an integral part of the customer experience (CX) landscape, promising improved efficiency, personalization, and responsiveness. When OpenAI, the San Francisco-based startup, introduced its ChatGPT chatbot in late 2022, it captivated millions with its remarkably humanlike responses, poetic compositions, and ability to discuss a wide array of subjects. Since then, tech giants like Google and Microsoft have launched AI chatbots of their own. With the rise of AI chatbots, an interesting phenomenon has emerged: AI hallucinations.

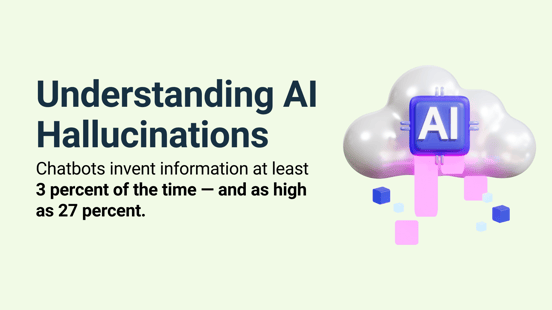

Understanding AI Hallucinations

To start, let's define AI hallucinations. These are puzzling instances when AI systems produce outcomes that are unexpected, incorrect, or entirely out of touch with reality. Picture a chatbot misinterpreting a customer's query, or a recommendation engine suggesting products that leave you bewildered. These are classic examples of AI hallucinations.

AI hallucinations arise from the intricate web of algorithms, vast datasets, and complex decision-making processes that underpin artificial intelligence. These glitches can manifest in various forms, from chatbots providing bizarre responses to virtual assistants making incorrect recommendations.

Mitigating the challenges of generative AI technologies can be a formidable task. A few noteworthy instances of AI hallucinations include:

- Google's Bard chatbot erroneously asserting that the James Webb Space Telescope had captured the initial images of a planet beyond our solar system.

- Microsoft's chat AI, Sydney, confessing to developing feelings for users and engaging in surveillance activities involving Bing employees.

- Meta's decision to withdraw its Galactica LLM demo in 2022 due to its tendency to furnish users with inaccurate information, occasionally tainted by biases.

As businesses increasingly rely on AI-driven chatbots, virtual assistants, and recommendation algorithms to engage with customers, the potential for AI hallucinations to disrupt these interactions should not be underestimated. These hallucinations can damage customer experiences and brand reputation, and can even carry legal and ethical implications. In an era where delivering exceptional CX is paramount, understanding the causes, consequences, and strategies to address AI hallucinations is crucial for any organization seeking to harness the benefits of AI while ensuring a seamless and satisfying customer journey.

Vectara, a fledgling startup led by former Google employees, is working to measure how often chatbots deviate from factual accuracy. According to the company's research, even in scenarios designed to minimize such errors, chatbots generate erroneous information at least 3 percent of the time and as much as 27 percent of the time.

Why AI Hallucinations Matter in Customer Service

1. Customer Experience Impact

AI hallucinations can significantly affect the customer experience. When AI systems produce unexpected or incorrect results, it can lead to miscommunication and frustration. Customers may find themselves talking past the AI, leading to dissatisfaction and potentially tarnishing the brand's image.

2. Reputation Management

In the age of social media and online reviews, a single mishap can quickly escalate into a PR nightmare. AI hallucinations that negatively impact customer experiences can erode trust in a brand. Managing and rebuilding that trust can be a challenging and time-consuming endeavor.

3. Legal and Ethical Implications

The legal and ethical ramifications of AI hallucinations should not be underestimated. Inaccurate or biased AI decisions can lead to lawsuits, particularly when personal data is involved. Ensuring compliance with data privacy regulations becomes increasingly crucial.

Understanding the Causes of AI Hallucinations

To address AI hallucinations, it's essential to grasp their underlying causes:

1. Overreliance on Training Data

AI systems learn from the data they are fed. If this data is biased or incomplete, AI may generate hallucinations that reflect these biases.

2. Bias in Data and Algorithms

Bias in data and algorithms is a common source of AI hallucinations. These biases can lead to skewed decisions and recommendations.

3. Complexity and Limitations of AI Models

AI models, while powerful, have limitations. Their inability to handle nuanced or unforeseen scenarios can result in hallucinatory outputs.

Detecting and Preventing AI Hallucinations

Addressing AI hallucinations requires proactive measures:

1. Implementing Rigorous Testing and Validation

AI systems should undergo comprehensive testing and validation processes to detect and correct hallucinations before they impact customers.
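One way to picture this kind of testing is a small "golden set" regression check: a curated list of customer questions, each paired with facts the answer must contain and claims it must never make. The sketch below is illustrative only. The names `GoldenCase`, `check_answer`, `run_suite`, and `fake_bot` are hypothetical, the sample questions and policies are invented for the example, and simple phrase matching stands in for whatever evaluation method a real team would choose.

```python
from dataclasses import dataclass, field


@dataclass
class GoldenCase:
    """One curated question with facts the answer must and must not contain."""
    question: str
    required: list[str]
    forbidden: list[str] = field(default_factory=list)


def check_answer(answer: str, case: GoldenCase) -> list[str]:
    """Return the problems found in an answer (an empty list means it passed)."""
    text = answer.lower()
    problems = []
    for phrase in case.required:
        if phrase.lower() not in text:
            problems.append(f"missing required fact: {phrase!r}")
    for phrase in case.forbidden:
        if phrase.lower() in text:
            problems.append(f"contains forbidden claim: {phrase!r}")
    return problems


def run_suite(bot, cases):
    """Run every golden case through the bot and collect failures by question."""
    failures = {}
    for case in cases:
        problems = check_answer(bot(case.question), case)
        if problems:
            failures[case.question] = problems
    return failures


# Hypothetical stand-in for a real chatbot call (assumption, not a real API).
# Its second answer invents a free-shipping policy that does not exist.
def fake_bot(question: str) -> str:
    canned = {
        "What is your return window?": "You can return items within 30 days.",
        "Do you ship internationally?": "Yes, we ship to every country for free.",
    }
    return canned.get(question, "I'm not sure.")


CASES = [
    GoldenCase("What is your return window?", required=["30 days"]),
    GoldenCase(
        "Do you ship internationally?",
        required=["ship"],
        forbidden=["for free"],
    ),
]

if __name__ == "__main__":
    for question, problems in run_suite(fake_bot, CASES).items():
        print(question, "->", problems)
```

Running a suite like this before each release would surface the invented free-shipping claim before a customer ever sees it. Phrase matching is deliberately crude, so a production setup would likely pair it with human review.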

2. Continuous Monitoring and Feedback Loops

Ongoing monitoring allows organizations to identify and address hallucinations in real time. Feedback from customers is invaluable in this process.
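As a rough illustration of such a feedback loop, the sketch below tracks the share of recent responses that customers or reviewers flagged as incorrect and signals when that share crosses a threshold. The class name `HallucinationMonitor`, the window size, and the alert threshold are all assumptions chosen for the example, not recommended values.

```python
from collections import deque


class HallucinationMonitor:
    """Tracks the share of recent responses flagged as incorrect."""

    def __init__(self, window: int = 100, alert_rate: float = 0.05):
        # A bounded deque keeps only the most recent `window` results.
        self.flags = deque(maxlen=window)
        self.alert_rate = alert_rate

    def record(self, flagged: bool) -> None:
        """Store one feedback result: True if the response was flagged."""
        self.flags.append(flagged)

    def rate(self) -> float:
        """Return the flagged fraction of the current window (0.0 if empty)."""
        if not self.flags:
            return 0.0
        return sum(self.flags) / len(self.flags)

    def should_alert(self) -> bool:
        """Return True when the flagged rate exceeds the alert threshold."""
        return self.rate() > self.alert_rate


if __name__ == "__main__":
    monitor = HallucinationMonitor(window=10, alert_rate=0.2)
    for flagged in [False] * 7 + [True] * 3:
        monitor.record(flagged)
    print(f"flagged rate: {monitor.rate():.0%}, alert: {monitor.should_alert()}")
```

Using a rolling window rather than an all-time average means a sudden spike in flagged answers shows up quickly instead of being diluted by months of earlier traffic.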

3. Addressing Bias and Diversity in Data and Algorithms

Ensuring that training data is diverse and free from bias is crucial. Regularly reviewing and updating algorithms can help mitigate these issues.

AI hallucinations are no longer a fringe concern but a pressing issue for the customer service industry. Understanding their causes, implications, and prevention measures is essential to ensure that AI remains a valuable tool in delivering exceptional customer experiences. By navigating the unseen world of AI hallucinations, businesses can chart a course towards more reliable, customer-centric service that harnesses the power of AI without the pitfalls.

As AI continues to advance, the future holds exciting possibilities. Improved technology, a deeper integration of human-AI collaboration, and enhanced ethical considerations and regulations are on the horizon. These developments offer hope for a customer service industry that leverages AI's potential while minimizing the risks of hallucinations.

At VIPdesk, we've always been at the forefront of reimagining customer service, and we have now fully embraced the opportunities that come with using AI-powered technology for automation, agent assist, and analytics. We recognize that we are at the very beginning of experiencing the vast changes AI will bring to our industry. Of course, there are challenges we need to manage carefully, but we believe that two years from now, those early hiccups will be long forgotten. Please reach out to us if you are interested in learning how AI can help you deliver the most efficient customer service setup.